Starvation in DBMS

Introduction to Starvation in DBMS

Starvation arises in database management systems (DBMS) when particular transactions or processes are repeatedly denied access to the system resources they need and are therefore delayed indefinitely. Starvation degrades the performance and dependability of the whole DBMS, which can lead to a poor user experience and delayed operations.

Fundamentally, starvation happens when a transaction or process is ready to run, having satisfied its other requirements (such as network requirements or policy compliance), yet still fails to execute. The root cause is restrictive scheduling and allocation of limited resources such as CPU time, memory, or disk I/O. As a result, the affected transactions or processes never complete and cannot accomplish their tasks.

Understanding Resource Allocation

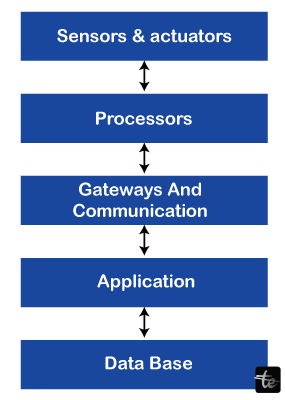

Allocating resources in database management systems (DBMS) is essential to good performance and efficiency. It means distributing the system's computational resources, including CPU time, memory, disk I/O, and network bandwidth, among the processes or transactions competing for them. Sound resource allocation ensures that no process or transaction that needs a resource is prevented from making progress, that is, that no starvation occurs.

In a DBMS, resource distribution is usually managed by scheduling algorithms. These algorithms determine the order in which transactions are granted resources and how long they may use them. The goal is to balance efficiency (system throughput and response times) with fair treatment of all tasks and threads, so that no task starves.

Resource allocation is a major concern in a DBMS, and one common method is priority-based scheduling. Here, each process or transaction is assigned a priority based on factors such as importance, deadline, or resource requirements. The scheduler then grants resources to the highest-priority process or transaction at any given time.
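To make the idea concrete, here is a minimal Python sketch of strict priority scheduling, assuming a simplified model in which the scheduler runs exactly one ready task to completion per tick and a lower number means a higher priority. The function name `run_priority_scheduler` and the task names are hypothetical. The usage example also shows the weakness of strict priorities: when high-priority work keeps arriving, a low-priority task is never scheduled.

```python
import heapq
import itertools


def run_priority_scheduler(initial, arrivals, ticks):
    """Simulate strict priority scheduling for `ticks` time steps.

    initial:  list of (priority, name) pairs ready at tick 0
    arrivals: dict mapping a tick to the (priority, name) pairs arriving then
    Lower numbers mean higher priority, matching heapq's min-heap order.
    Each tick, the highest-priority ready task runs to completion.
    Returns the task names in the order they ran.
    """
    counter = itertools.count()  # tie-breaker: FIFO order within one priority
    ready = [(prio, next(counter), name) for prio, name in initial]
    heapq.heapify(ready)
    ran = []
    for tick in range(ticks):
        for prio, name in arrivals.get(tick, []):
            heapq.heappush(ready, (prio, next(counter), name))
        if ready:
            _, _, name = heapq.heappop(ready)
            ran.append(name)
    return ran


# Hypothetical workload: one low-priority report, plus a new high-priority
# payment arriving every tick.
initial = [(5, "report"), (1, "pay0")]
arrivals = {t: [(1, f"pay{t + 1}")] for t in range(10)}
order = run_priority_scheduler(initial, arrivals, ticks=10)
print("report" in order)  # False: the report is starved
```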

Resource allocation in a DBMS is also shaped by the concurrency control system. Concurrency control mechanisms ensure that transactions running simultaneously do not interfere with one another or violate data consistency. Locking, timestamp ordering, and optimistic concurrency control are examples of mechanisms that manage access to shared resources and so avoid conflicts between concurrent transactions.

Resource allocation also covers memory: managing buffers and caches so that data can be retrieved as quickly as possible. DBMSs typically aim to minimize disk I/O latency and improve overall performance through sophisticated caching mechanisms. By keeping frequently accessed data in memory, a DBMS avoids unnecessary and expensive disk reads, which lets transactions run concurrently and complete more quickly.
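The caching idea can be sketched with a least-recently-used (LRU) buffer pool. This is a simplified Python illustration, not how any particular DBMS implements its buffer manager (real systems typically use more elaborate replacement policies); `LRUBufferPool` and the `read_from_disk` callback are hypothetical names standing in for a real page store.

```python
from collections import OrderedDict


class LRUBufferPool:
    """Toy buffer pool: caches pages in memory, evicting the least recently
    used page when full. `read_from_disk` stands in for real disk I/O."""

    def __init__(self, capacity, read_from_disk):
        self.capacity = capacity
        self.read_from_disk = read_from_disk
        self.pages = OrderedDict()  # page_id -> data, least recently used first
        self.hits = 0
        self.misses = 0

    def get_page(self, page_id):
        if page_id in self.pages:
            self.hits += 1
            self.pages.move_to_end(page_id)  # mark as most recently used
            return self.pages[page_id]
        self.misses += 1
        data = self.read_from_disk(page_id)  # the expensive path
        self.pages[page_id] = data
        if len(self.pages) > self.capacity:
            self.pages.popitem(last=False)  # evict the least recently used page
        return data


disk_reads = []


def fake_disk(page_id):
    """Hypothetical stand-in for a disk read; records which pages were fetched."""
    disk_reads.append(page_id)
    return f"page-{page_id}"


pool = LRUBufferPool(capacity=2, read_from_disk=fake_disk)
for page_id in [1, 2, 1, 1, 3, 1]:
    pool.get_page(page_id)
print(pool.hits, pool.misses)  # 3 3
print(disk_reads)              # [1, 2, 3]
```

With room for only two pages, the six requests above cause three disk reads instead of six, because repeated requests for page 1 are served from memory.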

Causes of Starvation

- Priority Inversion: Priority inversion can arise when a low-priority process holds a lock on a resource that a higher-priority process needs. The higher-priority transaction is delayed, and when it will finish becomes unpredictable. Starvation can happen in this case, because the high-priority transaction remains blocked until the low-priority transaction releases the resource; the problem is worse if medium-priority work keeps preempting the low-priority lock holder, delaying the release even further.

- Lock Contention: In multi-user systems where transactions compete for access to shared resources, lock contention is inevitable. A transaction whose lock requests are repeatedly denied because other transactions keep holding or acquiring conflicting locks may never proceed. For instance, a long-running transaction that holds an exclusive lock on a heavily used resource may prevent other transactions from accessing it, leaving those transactions starved.

- Resource Reservation: Some DBMSs offer the option of reserving resources, such as CPU time, memory, or disk I/O bandwidth, for a specific transaction or query. Suppose a transaction reserves everything it needs for a long period and dedicates those resources entirely to its own execution. Other transactions then find nothing left to reserve and cannot proceed. This typically happens when the system's resource-monitoring mechanisms are poorly configured or monitoring is insufficient.

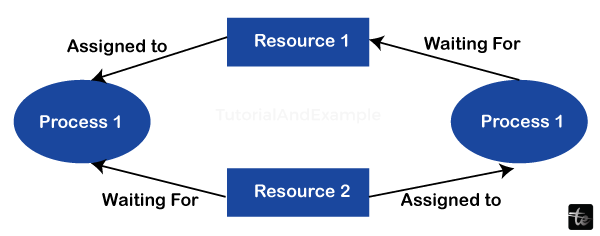

- Deadlocks: A deadlock occurs when two or more transactions each wait for a resource held by another transaction in the group, creating a circular dependency that prevents any of them from progressing. If a deadlock remains unresolved for a long time, the transactions involved are effectively starved, and in the worst case the performance of the entire system falls far below expectations.

- Unfair Scheduling Policies: DBMSs may use one or more prioritization policies that treat different kinds of transactions unevenly. For instance, a policy that favors short transactions or those submitted by specific users can starve transactions with lower priority or longer execution times.
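Deadlocks are commonly detected by building a wait-for graph, in which an edge from one transaction to another means the first is waiting for a lock the second holds, and searching it for a cycle. The following Python sketch does that with a depth-first search; `find_deadlock` and the dictionary-based graph format are assumptions made for illustration.

```python
def find_deadlock(wait_for):
    """Return one cycle in a wait-for graph as a list of transaction ids,
    or None if there is no deadlock.

    wait_for maps each transaction to the set of transactions it waits on.
    """
    WHITE, GRAY, BLACK = 0, 1, 2  # unvisited, on current path, finished
    color = {}
    stack = []

    def visit(txn):
        color[txn] = GRAY
        stack.append(txn)
        for other in wait_for.get(txn, ()):
            state = color.get(other, WHITE)
            if state == GRAY:  # back edge: `other` is on the current path
                return stack[stack.index(other):]
            if state == WHITE:
                cycle = visit(other)
                if cycle:
                    return cycle
        stack.pop()
        color[txn] = BLACK
        return None

    for txn in wait_for:
        if color.get(txn, WHITE) == WHITE:
            cycle = visit(txn)
            if cycle:
                return cycle
    return None


# Hypothetical graph: T1 waits on T2, T2 on T3, T3 on T1; T4 waits on T1.
graph = {"T1": {"T2"}, "T2": {"T3"}, "T3": {"T1"}, "T4": {"T1"}}
print(find_deadlock(graph))  # ['T1', 'T2', 'T3']
```

Once a cycle is found, a DBMS typically aborts one transaction in it (the victim) to break the deadlock. If the same transaction is chosen as the victim again and again, it can itself starve, which is why victim selection often takes factors such as transaction age into account.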

Types of Starvation

Transaction Starvation

Transaction starvation occurs when a transaction is consistently delayed in accessing resources or in executing. It can be caused by priority inversion, lock starvation, or poor resource allocation. Transaction starvation significantly degrades system performance and user satisfaction, especially when critical tasks do not complete as expected.

Thread Starvation

In multithreaded DBMS implementations, thread starvation is a state in which individual threads cannot make progress because of resource contention or poor scheduling policies. It appears when threads are left waiting on requests for shared resources such as CPU time, memory, and I/O.

The problem can be severe, because it leads to poor resource utilization and lower throughput, which in turn affects the performance of the whole system.

Query Starvation

Query starvation occurs when database queries fail to execute, or cannot obtain the resources they need, because of constraints or contention. This can happen in three ways: a query may run for a long time because it is waiting on data locked by other transactions; a query may be delayed by limited access to system resources; or a query may be unable to proceed because two concurrent transactions are contending for the data it needs, leaving each query blocked.

Query starvation may cause poor application performance, longer response times, and timeouts for client requests.

Resource Starvation

Resource starvation occurs when essential system resources such as CPU, memory, or disk I/O bandwidth are insufficient to serve the active transactions, queries, and other running processes. The shortage increases contention for the resources that are available, degrading system performance and creating bottlenecks in data processing workflows.

Well-designed resource management and capacity planning are necessary to control resource starvation and keep operations running smoothly and efficiently.

Lock Starvation

Lock starvation means that transactions or threads fail to obtain the locks they need to access or update shared data or resources, which slows down or blocks their execution. This can occur, for example, when locks on the same objects are requested so often that a waiting transaction keeps losing out to others, or when transactions hold locks for a long time and prevent other transactions from completing.

Lock starvation can degrade database performance and limit its scalability.
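Lock starvation depends heavily on the order in which a lock manager grants waiting requests. The toy Python simulation below compares two hypothetical grant policies for one lock with shared (S) and exclusive (X) modes: `reader_pref` grants a shared request whenever no exclusive lock is held, even if a writer is already waiting, while `fifo` grants requests strictly in arrival order. The workload, the tick-based timing, and the function name `simulate` are assumptions for illustration only; real lock managers are considerably more complex.

```python
def simulate(requests, policy, max_ticks=50):
    """Simulate one lock with shared ("S") and exclusive ("X") modes.

    requests: list of (arrival_tick, txn, mode, duration) tuples
    policy:   "reader_pref" or "fifo"
    Returns a dict mapping each txn to the tick its lock was granted;
    a txn missing from the result never got the lock within max_ticks.
    """
    waiting = []  # pending requests, oldest first
    holders = {}  # txn -> (mode, tick at which the lock is released)
    granted = {}
    for tick in range(max_ticks):
        # Release locks whose hold time has expired.
        holders = {t: (m, r) for t, (m, r) in holders.items() if r > tick}
        waiting += [req for req in requests if req[0] == tick]
        still_waiting = []
        for req in waiting:
            _, txn, mode, duration = req
            held_modes = {m for m, _ in holders.values()}
            if mode == "S":
                ok = "X" not in held_modes  # shared is compatible with shared
            else:
                ok = not holders  # exclusive needs the lock to be free
            if policy == "fifo" and still_waiting:
                ok = False  # an earlier request is still waiting: no overtaking
            if ok:
                holders[txn] = (mode, tick + duration)
                granted[txn] = tick
            else:
                still_waiting.append(req)
        waiting = still_waiting
    return granted


# Hypothetical workload: overlapping readers every 2 ticks, one writer at tick 1.
requests = [(0, "R0", "S", 3), (1, "W", "X", 1)]
requests += [(t, f"R{t}", "S", 3) for t in range(2, 60, 2)]

starved = simulate(requests, "reader_pref")
fair = simulate(requests, "fifo")
print("W" in starved)  # False
print(fair["W"])       # 3
```

With readers arriving every two ticks and each holding the lock for three, the lock is never free under `reader_pref`, so the writer `W` waits for the entire 50-tick window. Under `fifo`, later readers queue behind the writer, which gets the lock at tick 3 as soon as the first reader releases it.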

CPU Starvation

CPU starvation occurs when database processes or threads cannot get enough CPU time to perform well. It can be triggered when the CPU is overloaded with processing requests from concurrent transactions, queries, or system tasks, which results in high response times and degraded performance.

CPU starvation can be prevented by planning capacity and by tuning and sizing system configurations so that workloads execute efficiently.

Memory Starvation

Memory starvation appears when the active pages of transactions, queries, and data buffers no longer fit in the available system memory. As a result, paging may increase, cache effectiveness may decline, and disk operations may slow down.

Memory starvation can be addressed by choosing an efficient memory usage scheme, configuring memory management settings appropriately, and scaling memory resources as needed.

Impact of Starvation

Reduced Throughput

Starvation slows a system down because transactions, queries, and processes that cannot proceed accumulate. The delays cause a gradual buildup of overdue operations, which increases latency and ultimately reduces overall system capacity.

For example, reduced throughput limits the system's ability to process requests in parallel and can cause severe backlogs during periods of high load.

Increased Response Times

Starvation can increase query response times and transaction processing times, because requests are delayed or blocked while the resources they need are unavailable. Client requests are then processed more slowly, which can lead to timeouts, failed operations, and a disrupted user experience.

Long waits frustrate users and may force them to reconnect, retry, or even abandon the application.

Poor Concurrency

Starvation reduces how much shared data can be accessed concurrently by different transactions or queries. It increases contention for locks, latches, and other synchronization primitives, which forces more work to run serially. Low concurrency limits system scalability, and throughput and response times only get worse in highly concurrent environments.

Inefficient Resource Utilization

Starvation often results when some processes, queries, or transactions monopolize system resources at the expense of others, which are then denied those resources or delayed.

At the same time, part of the available CPU, memory, disk I/O bandwidth, or network bandwidth may sit idle while starved work waits, so the system as a whole becomes less efficient. Inefficient use of resources reduces productive capacity, raises operating costs, and keeps the system from reaching its expected performance.

Case Studies and Examples

Banking Transaction Processing

In a large financial system, many transactions, such as deposits, withdrawals, and money transfers, run simultaneously. With inappropriate resource distribution, some of these transactions can take too long to complete, causing timeouts or delays.

Take, for instance, a customer trying to withdraw cash from an ATM. The customer may have to wait a long time while non-urgent transactions, such as funds transfers, are completed before the withdrawal is processed. This can seriously frustrate customers and damage the bank's reputation.

To tackle this issue, the bank uses rule-based scheduling to ensure that time-critical transactions such as ATM withdrawals and online payments take precedence over less urgent ones.

In this mode of operation, the bank prioritizes different types of transactions depending on factors such as the customer profile and the current system load. This helps prevent starvation of time-critical transactions and improves overall transaction processing efficiency.

E-commerce Order Fulfillment

An e-commerce site operates in real time, and customers expect their orders to be processed and fulfilled promptly. However, during periods of high demand, such as the run-up to Christmas or flash sales, the order processing system risks leaving orders stuck in the queue.

This can delay order acknowledgment, shipment, and delivery, disappointing customers and causing sales targets to be missed.

To deal with this, the e-commerce platform actively performs load balancing and workload management, routing incoming orders to the nodes with the lowest workload.
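Routing each order to the least-loaded node can be sketched with a min-heap keyed on current load. This Python example is illustrative only: the per-order `cost` estimates, the node names, and the function `dispatch_orders` are hypothetical, and a production platform would measure load from live metrics rather than summing estimates.

```python
import heapq


def dispatch_orders(orders, node_names):
    """Send each order to the node with the smallest accumulated workload.

    orders:     list of (order_id, cost) pairs; cost is an estimated work amount
    node_names: names of the processing nodes
    Returns a dict mapping each node to the order ids assigned to it.
    """
    heap = [(0, name) for name in node_names]  # (current load, node name)
    heapq.heapify(heap)
    assignment = {name: [] for name in node_names}
    for order_id, cost in orders:
        load, name = heapq.heappop(heap)  # least-loaded node; ties by name
        assignment[name].append(order_id)
        heapq.heappush(heap, (load + cost, name))
    return assignment


result = dispatch_orders([("o1", 5), ("o2", 1), ("o3", 1), ("o4", 1)], ["a", "b"])
print(result)  # {'a': ['o1'], 'b': ['o2', 'o3', 'o4']}
```

Here node `a` receives the expensive order `o1`, so the three cheap orders that follow all go to node `b`, keeping both nodes busy instead of queuing everything on one.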

By scaling resource use with demand and prioritizing orders according to variables such as order value, shipping urgency, and customer loyalty, the platform limits the damage caused by starvation and keeps order processing times under control.

Best Practices for Managing Starvation

- Prioritize Resource Allocation: Implement an allocation algorithm that assigns resources according to task priority. By assigning priority levels to tasks based on factors such as deadlines, importance, and impact on system performance, you make it unlikely that low-priority processes will hold up high-priority ones.

- Dynamic Resource Allocation: Implement flexible allocation mechanisms that adapt resource use as workloads and system conditions change. Use dynamic algorithms that select activities based on live measures such as resource consumption, response time, and system load, so that resources are used well and the risk of starvation is reduced.

- Monitor System Performance: Track metrics such as CPU usage, memory utilization, disk I/O, and network bandwidth to detect bottlenecks or resource contention early. Set up monitoring that alerts administrators to anomalies or impending shortages so they can intervene before a resource is exhausted.

- Optimize Task Scheduling: Maximize resource utilization and throughput while distributing resources fairly. Use scheduling algorithms such as shortest job first (SJF), round-robin, or a multilevel feedback queue (MLFQ) to regulate how processing time is shared, so that no task consumes a disproportionate share of system resources and starves others. Note that SJF on its own favors short tasks and can starve long ones, so it needs an additional safeguard against starvation.

- Resource Reservation and Guarantees: Use resource reservation policies and mechanisms to guarantee minimum service levels to critical tasks and users. Techniques such as quality of service (QoS) policies, service-level agreements (SLAs), or resource reservation can dedicate resources or bandwidth to high-priority tasks during time-critical events, reducing their vulnerability to starvation.
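A common way to keep priority-based allocation without starving low-priority work is aging: the longer a task waits, the higher its effective priority becomes. Aging is not named in the list above, so treat this Python sketch as one possible approach under simplifying assumptions: each task is a recurring source of work that is always ready, exactly one task runs per tick, a higher number means higher priority, and ties go to the task listed first. The function `schedule_with_aging` and its `aging_rate` parameter are hypothetical.

```python
def schedule_with_aging(tasks, ticks, aging_rate=1):
    """Run one task per tick, always choosing the highest effective priority.

    tasks: dict mapping task name -> base priority (higher = more important)
    Each tick a task waits, its effective priority grows by aging_rate;
    after a task runs, its effective priority resets to its base priority.
    Returns the task names in the order they ran.
    """
    effective = dict(tasks)
    ran = []
    for _ in range(ticks):
        # max() returns the first maximal key, so ties go to the earlier task.
        chosen = max(effective, key=effective.get)
        ran.append(chosen)
        for name in effective:
            if name == chosen:
                effective[name] = tasks[name]  # reset after running
            else:
                effective[name] += aging_rate  # waiting tasks age upward
    return ran


tasks = {"payment": 10, "report": 1}  # hypothetical recurring tasks
with_aging = schedule_with_aging(tasks, ticks=12)
without_aging = schedule_with_aging(tasks, ticks=12, aging_rate=0)
print(with_aging.index("report"))   # 10
print("report" in without_aging)    # False
```

With aging, the low-priority `report` task catches up and runs at tick 10; with `aging_rate=0` the scheduler degenerates to strict priority and `report` never runs.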

Future Trends and Considerations

- Artificial Intelligence and Machine Learning: The growing integration of artificial intelligence (AI) and machine learning (ML) methods into DBMSs opens new possibilities for agile resource provisioning and workload balancing. AI-based algorithms can analyze historical performance data to predict workload patterns and adjust resource allocation strategies in real time, helping to prevent starvation and improve system efficiency.

- Autonomous and Self-Optimizing Systems: As DBMS architectures become more autonomous and self-optimizing, systems can automatically reconfigure resource allocation parameters in real time in response to changing workloads and system conditions. Such self-managing systems use predictive analytics, adaptive algorithms, and policy engines to anticipate future demand and maintain efficient performance without human supervision.

- Edge Computing and Distributed Architectures: With the growth of edge computing and distributed architectures, managing resources across geographically distributed edge nodes and devices creates both challenges and opportunities. Distributed DBMS solutions use decentralized processing, data replication, and edge caching to improve availability, reduce latency, and prevent starvation in resource-constrained distributed environments.

- Ethical and Regulatory Considerations: As DBMSs continue to evolve and handle increasingly sensitive data, ethical and regulatory considerations around data privacy, security, and fairness become more prominent. Addressing these considerations requires a holistic approach to resource allocation that prioritizes data integrity, confidentiality, and compliance with legal and ethical standards, while also ensuring equitable access to resources and preventing discrimination or bias in resource allocation decisions.

Conclusion

In conclusion, starvation remains a critical challenge in DBMS, and strategic methods are needed to overcome it. We have examined how resource contention, scheduling algorithms, and workload prioritization can starve a process, thread, or transaction of computational resources. Starvation leads to poor performance and reduced productivity and efficiency, and ultimately leaves users unhappy. Understanding the causes, forms, and impact of starvation should therefore be a priority for every organization; by also implementing best practices and taking full advantage of new technologies, organizations can reduce the risk of starvation and keep their DBMS running smoothly.